Most analytics stacks break the moment a business moves on-chain. Traditional BI assumes stable schemas, account-based identity, and data that arrives in controlled batches. Blockchain systems don’t. They generate immutable event streams, fragment state across protocols and layers, and identify users through wallets rather than clean customer records.

That’s why enterprise blockchain analytics matters in 2026 for CTOs, product leaders, compliance teams, and Web3 founders who are building real systems rather than public dashboards. The problem isn’t access to data. It’s turning raw on-chain activity into decision-grade intelligence for fraud controls, token design, governance, reporting, and operations.

The market signal is already clear. In India, the blockchain market is projected to grow at a CAGR of 87.3% from 2023 to 2030, reaching $6.12 billion by 2030, with enterprise applications making up over 60% of deployments in finance and supply chain, according to PixelPlex’s blockchain statistics and trends analysis. For a CTO, that changes the question from “Should we analyse blockchain data?” to “What architecture gives us reliable, compliant, real-time insight across on-chain and off-chain systems?”

The Rise of Enterprise Blockchain Analytics

Enterprise blockchain analytics has moved from experimental reporting to core infrastructure. The shift is visible in regulated payment pilots, tokenised asset programs, and multi-chain products that now generate business events outside the databases an enterprise owns. For a CTO, the implication is straightforward. On-chain data now affects revenue recognition, fraud controls, treasury visibility, compliance review, and customer intelligence.

Why enterprise demand accelerated

The market changed because blockchain moved into operating environments with audit requirements, internal controls, and service-level expectations. Public dashboards are useful for traders watching a token or protocol. They are inadequate for an enterprise trying to reconcile wallet activity to customers, investigate suspicious flows, or explain a KPI to finance and legal.

That gap is architectural, not cosmetic.

An enterprise analytics team has to join ledger events with off-chain context such as customer accounts, case records, payment references, sanctions screening results, and product telemetry. It also has to support permission boundaries. Some data is public by design. Some sits in private ledgers, custody systems, internal warehouses, or compliance tools. The analytical value comes from connecting those layers without breaking governance rules.

Why decentralised systems create a different analytics problem

Conventional BI tools were built for systems with controlled schemas and business identifiers. Enterprise blockchain analytics starts earlier in the stack. It has to ingest chain data reliably, decode contract interactions, resolve token and protocol metadata, and map wallet activity to internal entities before standard reporting becomes useful.

Three implementation pressures drive adoption:

- Cross-network activity creates blind spots unless teams normalise events across L1s, L2s, sidechains, bridges, and enterprise systems.

- Identity resolution requires address clustering, application-level attribution, and careful handling of false positives, especially in regulated environments.

- Operational latency matters because fraud, liquidation risk, treasury movement, and policy breaches cannot wait for end-of-day reporting.

This changes the buying decision. A dashboard product may answer a few investigative questions. It does not solve ingestion reliability, entity mapping, historical backfills, private data joins, or lineage controls for audit.

Why 2026 is different

By 2026, the debate is no longer whether blockchain data matters to the enterprise. The harder question is whether the organisation can convert chain activity into controlled, explainable metrics that stand up in operations and compliance.

That requirement shows up in several forms. Finance teams want token flow attribution that ties to books and treasury policy. Product teams want wallet retention, cohort behaviour, and contract usage mapped to customer journeys. Risk teams want near-real-time alerts across sanctioned exposure, bridge flows, mixer interaction, and abnormal contract behaviour. None of those outcomes come from raw node access alone.

A common misstep follows. Teams buy an analytics tool expecting an answer to an architecture problem. The usual result is partial coverage, duplicated pipelines, unresolved identity gaps, and a backlog of custom integration work that appears only after production traffic starts.

The rise of enterprise blockchain analytics is therefore less about market interest and more about system design. Organisations now need analytics stacks that treat blockchain as a first-class data source, while still meeting the standards expected of enterprise software: access control, reproducibility, auditability, and integration with the rest of the business.

Key Differences Between Blockchain Analytics and Traditional BI

A CTO evaluating a blockchain data analytics platform should start with one assumption. Blockchain analytics is not a subtype of traditional BI. It’s a parallel discipline with different source data, identity models, and operational constraints.

Teams that treat it as ordinary reporting usually discover the mismatch after launch, when compliance asks for traceability, product asks for wallet retention, and finance asks for token flow attribution.

Data source and structure

Traditional BI begins with systems of record that an enterprise owns. Tables are designed for business workflows. Fields map cleanly to entities like customer, order, invoice, or session.

Blockchain analytics begins with distributed ledgers and contract events. The source data is trustworthy in one sense because it’s immutable, but it’s also harder to use because it’s low-level and often unintuitive.

Here’s the practical contrast:

| Dimension | Traditional BI | Blockchain analytics |
|---|---|---|
| Primary source | Internal databases | Public and permissioned ledgers |
| Data format | Structured business records | Transactions, logs, contract events, state changes |
| Mutability | Records can be updated | Historical records are immutable |
| Refresh pattern | Batch or scheduled | Continuous block-driven ingestion |
| Entity model | User and account IDs | Wallets, contracts, protocols |

Identity is the hardest gap

In conventional analytics, identity resolution starts with known users. In enterprise on-chain analytics, it starts with addresses. That sounds like a small difference, but it changes almost everything.

A wallet doesn’t tell you whether the actor is a customer, a market maker, a treasury account, a bot, or a counterparty from another protocol. You need enrichment layers to classify addresses, infer relationships, and connect them to off-chain business context.

That’s why blockchain business intelligence usually requires:

- Address labelling for treasury, exchange, protocol, internal, and external entities

- Entity resolution rules that join wallet activity to app users where allowed

- Protocol-aware decoding so raw event signatures become useful business actions

- Risk scoring logic for compliance and operational triage

A generic BI warehouse won’t solve that on its own. An enterprise blockchain development company with data engineering capability usually has to design those identity and decoding layers deliberately.
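The first two layers, address labelling and permitted entity resolution, might look something like the minimal sketch below. The label registry, the wallet-to-user mapping, and the addresses are hypothetical placeholders. In a real stack they would be fed by treasury records, vendor label feeds, and whatever joins your privacy rules allow.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical label registry (address -> category). Placeholder addresses only.
ADDRESS_LABELS = {
    "0xtreasury01": "treasury",
    "0xexchange99": "exchange",
    "0xprotocol42": "protocol",
}

# Hypothetical wallet -> app user mapping, populated only where a join is permitted.
WALLET_TO_USER = {
    "0xalicewallet": "user-1001",
}


@dataclass(frozen=True)
class ResolvedAddress:
    address: str
    category: str           # treasury, exchange, protocol, customer, or unknown
    user_id: Optional[str]  # internal user ID, set only for permitted joins


def resolve(address: str) -> ResolvedAddress:
    # EVM addresses are case-insensitive (EIP-55 uses case only as a checksum),
    # so normalise before lookup.
    addr = address.lower()
    if addr in ADDRESS_LABELS:
        return ResolvedAddress(addr, ADDRESS_LABELS[addr], None)
    if addr in WALLET_TO_USER:
        return ResolvedAddress(addr, "customer", WALLET_TO_USER[addr])
    # Keep unlabelled addresses explicit instead of guessing a category.
    return ResolvedAddress(addr, "unknown", None)
```

Treating `unknown` as a first-class category is a deliberate choice. Downstream metrics can then report how much activity is still unattributed, instead of silently folding it into a customer or counterparty bucket.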

Blockchain data is transparent, but transparency isn’t the same as understanding. Enterprises still need interpretation, attribution, and context.

Time, trust, and visibility

Traditional BI often optimises for historical analysis. Blockchain monitoring tools must support historical analysis and live detection at the same time.

That creates three design implications.

- Time sensitivity matters more because each new block can change risk posture, settlement status, or liquidity exposure.

- Trust boundaries are different because on-chain data may be public while off-chain enrichment remains private and access-controlled.

- Visibility is asymmetric because public chains expose transactions broadly, but permissioned chains and enterprise applications expose only selected states.
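Settlement status shows time sensitivity most clearly, because it has to be recomputed as each block arrives. The sketch below uses a simple confirmation-depth rule. The threshold of 12 is an assumed policy value. Chains that expose explicit finality, such as Ethereum's `finalized` block tag, may offer a better signal than raw depth.

```python
from enum import Enum
from typing import Optional


class Settlement(Enum):
    PENDING = "pending"          # not yet included in any block
    PROVISIONAL = "provisional"  # included, but shallow enough to be reorged out
    SETTLED = "settled"          # at or beyond the required confirmation depth


def settlement_status(
    tx_block: Optional[int],
    head_block: int,
    required_confirmations: int = 12,  # assumed policy value, tune per chain
) -> Settlement:
    if tx_block is None:
        return Settlement.PENDING
    # A transaction in the current head block has one confirmation.
    confirmations = head_block - tx_block + 1
    if confirmations >= required_confirmations:
        return Settlement.SETTLED
    return Settlement.PROVISIONAL
```

The status depends on the current head, so a transaction can move from provisional to settled even though no new event touches it. That is why fields like this belong in a stream-updated view, not a nightly batch.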

Why the stack must be hybrid

Most enterprises don’t need pure on-chain dashboards. They need a hybrid analytics system that can answer questions like:

- Which wallets belong to high-value customers?

- Which contract interactions indicate fraud risk?

- Which token transfers correspond to internal treasury operations?

- Which governance actions correlate with product participation?

- Which bridge flows create settlement or compliance exceptions?

That’s why enterprise Web3 analytics platforms usually combine blockchain data pipeline engineering with more familiar warehousing, dashboarding, and alerting practices. The front end may look like BI. The back end is a different animal.

Critical Enterprise Use Cases for Blockchain Analytics

By 2026, enterprise blockchain analytics is less about public market visibility and more about operational control. The highest-value use cases appear where on-chain events must be reconciled with private customer data, treasury policy, compliance rules, and product KPIs that public dashboards cannot see.

That changes the enterprise question. The issue is rarely whether a transaction can be traced on-chain. The issue is whether a risk team, product team, or treasury desk can join that event to internal systems fast enough to act on it.

Transaction monitoring and fraud detection

This is often the first use case to get funded because the operating model is clear. Compliance teams need to screen wallets, investigate suspicious flows, and document why a transaction was approved, escalated, or blocked.

The hard part is not blockchain visibility. It is orchestration across systems with different latency and trust assumptions. A usable monitoring stack usually combines:

- Continuous wallet screening against sanctions, internal watchlists, and exposure policies

- Pattern detection for mixer interaction, rapid hop sequences, unusual bridge use, and contract-level anomalies

- Case management that links alerts to investigators, decisions, and evidence

- Reviewable audit logs that satisfy internal control and external examination requirements
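Pattern detection is where screening goes beyond a list lookup. To illustrate rapid hop sequences, the sketch below finds chains of transfers in which funds pass through intermediate addresses faster than a chosen gap. The `Transfer` shape, the 600-second gap, and the three-hop minimum are assumptions, not a standard typology.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    src: str
    dst: str
    amount: int
    ts: int  # unix seconds


def rapid_hop_chains(transfers, min_hops=3, max_gap_s=600):
    """Return maximal chains where funds leave each intermediate address within
    max_gap_s seconds of arriving. Thresholds are illustrative assumptions."""
    outgoing, incoming = defaultdict(list), defaultdict(list)
    for t in transfers:
        outgoing[t.src].append(t)
        incoming[t.dst].append(t)

    def continues(prev, nxt):
        return 0 <= nxt.ts - prev.ts <= max_gap_s

    def is_start(t):
        # A chain starts at a transfer that does not continue an earlier hop.
        return not any(p is not t and continues(p, t) for p in incoming[t.src])

    chains = []

    def extend(path):
        extended = False
        for nxt in outgoing[path[-1].dst]:
            if nxt not in path and continues(path[-1], nxt):
                extend(path + [nxt])
                extended = True
        # Only record chains that cannot be extended further (maximal chains).
        if not extended and len(path) >= min_hops:
            chains.append(path)

    for t in transfers:
        if is_start(t):
            extend([t])
    return chains


def touches_watchlist(chain, watchlist):
    """True if any address in the chain is on the (hypothetical) watchlist."""
    return any(t.src in watchlist or t.dst in watchlist for t in chain)
```

Exhaustive path search grows quickly on dense transfer graphs. A production version would need to bound depth or run on a pre-filtered subgraph, such as flows touching screened addresses.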

For teams evaluating implementation patterns, on-demand KYT compliance solutions show how transaction screening can be embedded into operational workflows instead of isolated in a research tool.

Token economy analytics

Token analytics matters whenever token design affects treasury exposure, user incentives, reporting obligations, or redemption logic. Public dashboards can show supply and transfer counts. Enterprise teams need to know which movements represent market activity, internal allocation, vesting, collateral management, or policy exceptions.

That requires wallet attribution and governance-aware modeling. Without both, supply figures look precise while hiding concentration risk or treasury dependency.

Teams usually track:

- Velocity and holding periods across customer, investor, treasury, and contract cohorts

- Distribution concentration by beneficial owner or control group, not just wallet count

- Staking and unstaking pressure by participant segment

- Emissions and burns against documented protocol or treasury rules

This is especially relevant in real-world asset tokenization, where on-chain transfers must match off-chain ownership records, servicing events, and legal constraints.
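Concentration metrics show the gap between wallet count and beneficial ownership directly. The sketch below rolls wallet balances up to owners using a hypothetical `wallet_owner` mapping, standing in for the output of your attribution layer. It then computes a top-N share and a Herfindahl-Hirschman index, two common concentration measures.

```python
from collections import defaultdict


def owner_balances(wallet_balances, wallet_owner):
    """Roll wallet balances up to beneficial owners.
    Wallets with no known owner are treated as their own owner."""
    totals = defaultdict(int)
    for wallet, balance in wallet_balances.items():
        totals[wallet_owner.get(wallet, wallet)] += balance
    return dict(totals)


def top_n_share(balances, n):
    """Share of total supply held by the n largest holders."""
    total = sum(balances.values())
    if total == 0:
        return 0.0
    return sum(sorted(balances.values(), reverse=True)[:n]) / total


def hhi(balances):
    """Herfindahl-Hirschman index over holder shares: 1/N when N holders are
    equal, 1.0 when a single holder owns everything."""
    total = sum(balances.values())
    if total == 0:
        return 0.0
    return sum((b / total) ** 2 for b in balances.values())
```

Take four wallets holding 30, 30, 20, and 20 units. The largest wallet holds 30% of supply. If the first two wallets belong to the same owner, the largest owner holds 60%, and the HHI rises from 0.26 to 0.44. The on-chain data did not change. Only the attribution did.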

User behaviour analytics

For product teams, blockchain analytics becomes useful only after wallet activity is translated into business events. A swap, stake, mint, vote, or bridge transaction is not a KPI. It becomes one after the event is mapped to onboarding, retention, monetization, or churn logic.

A strong model treats the chain as one telemetry source among several. It joins contract events with CRM records, campaign metadata, support tickets, KYC state, and account hierarchies. That is how a product team separates anonymous traffic from valuable customers and casual usage from high-intent behavior. The broader pattern is similar to other applications of data analytics, where the reporting layer matters less than the decisions it supports.

A practical dashboard often looks like this:

| Behaviour layer | On-chain signal | Business interpretation |
|---|---|---|
| Activation | First contract interaction | User completed core onboarding action |
| Repeat usage | Recurring wallet actions | Retention or habit formation |
| Value depth | Higher-value interactions | Premium customer or power user behaviour |
| Feature mix | Contract-level event patterns | Which product modules get real usage |

The architectural challenge is identity resolution. One customer may control multiple wallets. One wallet may appear across several apps, chains, or delegated actors. If that relationship model is weak, retention analysis and lifecycle segmentation become unreliable.
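A disjoint-set (union-find) structure is one common way to represent the wallet-to-customer relationship. Each piece of linking evidence merges two wallets into one entity, and retention is then computed per entity rather than per wallet. The linking evidence and period labels below are hypothetical.

```python
from collections import defaultdict


class UnionFind:
    """Disjoint-set structure grouping wallets into customer entities."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def build_entities(links):
    """links: (wallet_a, wallet_b) pairs judged to share an owner, e.g. both
    verified by the same logged-in account (hypothetical evidence source)."""
    uf = UnionFind()
    for a, b in links:
        uf.union(a, b)
    return uf


def retained(events, uf, period, next_period):
    """Entities active in `period` that are active again in `next_period`.
    events: (wallet, period) pairs derived from contract interactions."""
    active = defaultdict(set)
    for wallet, p in events:
        active[p].add(uf.find(wallet))
    return active[period] & active[next_period]
```

Consider a customer who used wallet `w1` in January and `w2` in February. At wallet level that customer looks churned; at entity level they are retained. The reverse risk also applies. A single weak link merges two clusters permanently, so only high-confidence evidence should feed `union`. This is the false-positive concern raised earlier.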

DAO governance insights

Governance analytics is often underbuilt because teams stop at vote counts and turnout percentages. Enterprises using governance in treasury, consortium coordination, or token-holder decision systems need a much stricter view.

The useful questions are about control, participation quality, and policy execution:

- Who participates consistently across proposal types and time periods

- Which voter blocs influence treasury or protocol changes

- How much voting power is concentrated among delegates, insiders, or coordinated groups

- Whether discussion channels and on-chain outcomes diverge in ways that suggest weak process integrity

This use case becomes more important when governance output affects legal entities, grants, treasury actions, or customer-facing policy. In those settings, analytics has to connect forum data, snapshot data, on-chain execution, and internal approval records. Governance tooling choices matter, but the main enterprise concern is whether proposals can be audited from discussion through execution.
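Concentration among delegates is a governance metric that vote counts hide. The sketch below assigns delegated power to the delegates, then counts how many voters are needed to exceed half of the total, a Nakamoto-coefficient-style measure. The balances and delegation map are hypothetical, and single-level delegation is an assumption.

```python
from collections import defaultdict


def effective_power(balances, delegations):
    """Move each holder's voting power to its delegate (single-level delegation,
    an assumption; some systems allow chains or partial delegation)."""
    power = defaultdict(int)
    for holder, amount in balances.items():
        power[delegations.get(holder, holder)] += amount
    return dict(power)


def min_voters_for_majority(power, threshold=0.5):
    """Smallest number of voters whose combined power exceeds `threshold`
    of the total."""
    total = sum(power.values())
    running = 0
    for count, p in enumerate(sorted(power.values(), reverse=True), start=1):
        running += p
        if running > threshold * total:
            return count
    return len(power)
```

In the test data, four holders need two voters to reach a majority. Once holder `b` delegates to holder `a`, one delegate controls 70% of the vote. Tracking that number per proposal over time tells you more than turnout alone.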

DeFi analytics

DeFi creates the widest gap between retail dashboards and enterprise requirements. A public dashboard can report TVL, volume, and yields. A treasury or risk team needs to understand dependency chains, liquidation paths, bridge exposure, stablecoin concentration, and the timing of settlement risk.

That usually means answering a narrower set of high-impact questions:

- Which pools, protocols, or bridges create concentrated counterparty exposure

- Where collateral impairment could propagate into treasury losses

- Which asset routes depend on contracts with weak security or governance controls

- How quickly abnormal withdrawals, oracle stress, or pool imbalance can be detected

The difficult part is classification. A single position may span LP tokens, staking wrappers, borrowed assets, and off-chain hedges. If the analytics layer treats each contract interaction as an isolated event, the enterprise view of exposure will be wrong. DeFi analytics therefore works best when protocol decoding, position modeling, and treasury policy are designed as one system.

Operator’s view: In DeFi, aggregate metrics often hide the actual risk. Concentration, timing, and dependency structure usually matter more than the headline total.
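One way to avoid the isolated-event problem is to treat a position as a set of legs denominated in underlying assets. In the sketch below, an LP token, a staking wrapper, and a loan are each converted into signed amounts of underlying assets and then netted. Pool reserves, the exchange rate, and asset names are hypothetical. Pricing, fees, and liquidation thresholds are deliberately left out.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Pool:
    reserves: dict[str, float]  # underlying asset -> pool reserve
    lp_supply: float


def lp_legs(lp_amount, pool):
    """Pro-rata claim on pool reserves. Valid for fungible-LP pools;
    concentrated-liquidity positions need a different model."""
    share = lp_amount / pool.lp_supply
    return [(asset, share * reserve) for asset, reserve in pool.reserves.items()]


def wrapper_legs(wrapped_amount, underlying, exchange_rate):
    """Staking wrapper redeemable for `exchange_rate` underlying units each."""
    return [(underlying, wrapped_amount * exchange_rate)]


def debt_legs(asset, borrowed):
    return [(asset, -borrowed)]  # borrowed assets are negative exposure


def net_exposure(*leg_groups):
    """Sum signed legs per underlying asset across every position component."""
    totals = defaultdict(float)
    for legs in leg_groups:
        for asset, amount in legs:
            totals[asset] += amount
    return dict(totals)
```

Netting per underlying asset can reveal hidden directional risk. In the test data, a position that looks like a stablecoin LP plus a loan turns out to be net long about 6.5 ETH, through both the pool and the wrapper.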

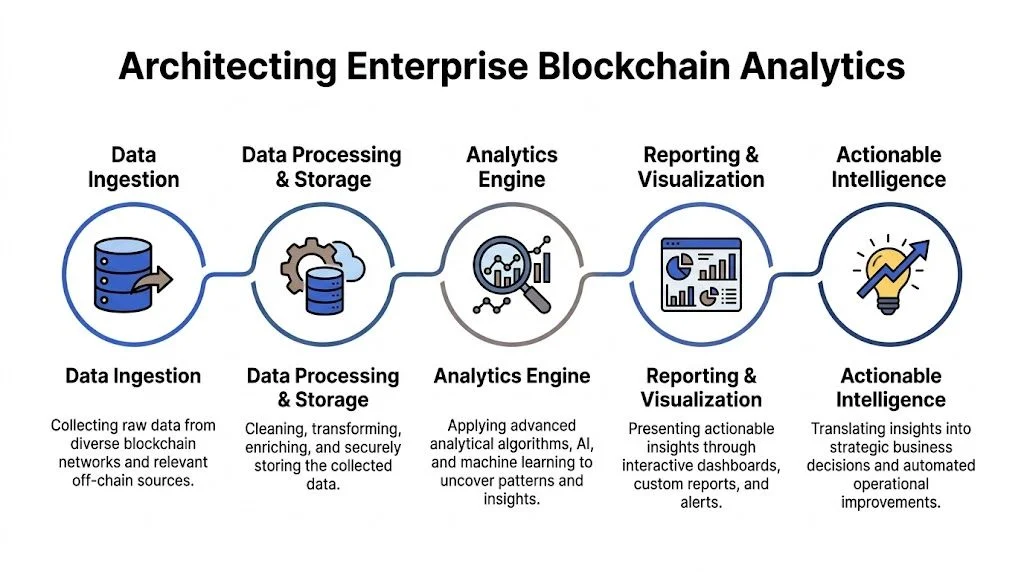

Architecting an Enterprise Blockchain Analytics System

Most failed analytics programmes don’t fail at the dashboard layer. They fail upstream, where ingestion is incomplete, indexing is protocol-blind, enrichment is weak, and off-chain joins are delayed or unreliable.

A durable architecture has to support low-level blockchain parsing and high-level business interpretation in the same pipeline.

Ingestion from on-chain and off-chain sources

The first decision is scope. Are you ingesting raw blocks from nodes, enriched data from third-party APIs, or both?

Node-level ingestion gives control and auditability, but it increases operational burden. API-based ingestion speeds delivery, but it can limit visibility into edge cases and create dependency risk.

The source model usually includes:

- On-chain inputs such as blocks, receipts, logs, traces, token transfers, and contract state

- Application inputs such as user records, KYC status, orders, treasury actions, and support cases

- Reference inputs such as address labels, protocol metadata, and risk categories

For enterprise systems, the right answer is rarely pure on-chain. It’s a hybrid data model.
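Whichever ingestion path you choose, the pipeline has to handle chain reorganisations, where recently ingested blocks are replaced. A minimal sketch of parent-hash checking follows. `fetch_block` is a hypothetical callable that would wrap a node RPC such as `eth_getBlockByNumber` or a provider API. The in-memory `store` stands in for a warehouse table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    number: int
    hash: str
    parent_hash: str


def ingest(store, fetch_block, number, max_reorg_depth=64):
    """Ingest canonical block `number` into `store` (height -> Block).

    If the new block's parent hash disagrees with what we stored, walk back
    and re-fetch until the stored chain matches again. Returns the heights
    whose previously stored blocks were replaced, so downstream decoded rows
    for those heights can be deleted and rebuilt.
    """
    to_write = [fetch_block(number)]
    replaced = []
    while True:
        child = to_write[-1]
        stored_parent = store.get(child.number - 1)
        if stored_parent is None or stored_parent.hash == child.parent_hash:
            break
        replaced.append(child.number - 1)
        if len(replaced) > max_reorg_depth:
            raise RuntimeError("reorg deeper than max_reorg_depth; needs review")
        to_write.append(fetch_block(child.number - 1))
    for block in to_write:
        store[block.number] = block
    return sorted(replaced)
```

The returned heights give a precise list of what must be reprocessed. Owning this logic yourself is part of the auditability argument for node-level ingestion.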

Indexing and decoding

Raw blockchain data is not analytics-ready. Enterprises need indexers that know which contracts matter, which events to decode, and how to map technical emissions into business entities.

Teams choose between protocol frameworks such as The Graph and custom indexers built around their own contracts or transaction logic. The trade-off is speed versus control.

Use custom indexing when:

- contract logic is proprietary

- event semantics are business-specific

- compliance requires deeper traces

- you need deterministic handling of edge cases

Use managed indexing when:

- the protocol surface is standardised

- speed to first dashboard matters

- data requirements are broad rather than deep

India’s enterprise environment adds a performance requirement here. According to Blocsys’ enterprise blockchain solutions 2026 overview, enterprise blockchain analytics in India in 2026 relies on Layer 2 scaling on Ethereum and Polygon. That scaling supports aggregate throughput of over 3,400 transactions per second across major chains and reduces latency for compliance monitoring during DeFi trading and cross-chain swaps. The same source cites Ethereum’s ~600k daily active addresses and $78B+ TVL, which is why indexing has to be built for sustained volume rather than occasional analysis.
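Decoding is the step that turns raw logs into business actions. The sketch below decodes the standard ERC-20 `Transfer` event from a JSON-RPC log object with no library dependency. A production indexer would use ABI-driven decoding for every contract it tracks. The `decimals` argument is assumed to come from a token metadata table.

```python
from decimal import Decimal
from typing import Optional

# keccak256("Transfer(address,address,uint256)"): topic0 of the standard Transfer event.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def topic_to_address(topic: str) -> str:
    # Indexed address arguments are left-padded to 32 bytes; keep the last 20 bytes.
    return "0x" + topic[-40:].lower()


def decode_erc20_transfer(log: dict, decimals: int) -> Optional[dict]:
    """Decode a JSON-RPC log object into a business-ready transfer row."""
    topics = log["topics"]
    # ERC-721 emits the same signature with the token ID also indexed (4 topics),
    # so the topic count is a practical way to tell the two apart.
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        return None
    raw_amount = int(log["data"], 16)  # non-indexed uint256 value
    return {
        "token": log["address"].lower(),
        "from": topic_to_address(topics[1]),
        "to": topic_to_address(topics[2]),
        "amount_raw": raw_amount,
        "amount": Decimal(raw_amount) / (Decimal(10) ** decimals),
        "block": int(log["blockNumber"], 16),
    }
```

Keeping both `amount_raw` and the scaled `amount` lets downstream reconciliation work in exact base units, while reports use human-readable values.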

ETL and storage design

Once indexed, the data still needs transformation. Many blockchain reporting tools stay too shallow. They decode events but don’t build a complete semantic layer.

A proper blockchain data pipeline should transform low-level records into business-ready models such as:

| Raw input | Transformation | Business-ready output |
|---|---|---|
| Transfer events | Wallet classification | Customer deposit activity |
| Contract logs | ABI decoding | Product feature usage |
| Bridge transactions | Path stitching | Cross-chain settlement flow |
| Staking records | Cohort enrichment | Retention and lock-up analysis |

Storage choices depend on query style. Warehouses handle historical analysis well. Stream systems support alerts and near-real-time state updates. Most enterprise stacks need both.
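The path-stitching row in the table above is worth making concrete, because it is where cross-chain settlement exceptions come from. The sketch assumes the bridge emits an identifier that appears on both the source and destination legs. If it does not, matching has to fall back on heuristics such as wallet, amount, and time window. The SLA window and fee tolerance are hypothetical parameters.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BridgeEvent:
    message_id: str  # assumed identifier present on both chains
    chain: str
    wallet: str
    amount: int      # base units
    ts: int          # unix seconds


@dataclass(frozen=True)
class StitchedFlow:
    source: BridgeEvent
    destination: Optional[BridgeEvent]
    status: str      # "settled", "pending", or "exception"


def stitch(source_events, dest_events, now, sla_s=3600, max_fee=0):
    """Pair source-chain locks with destination-chain mints or releases."""
    dest_by_id = {e.message_id: e for e in dest_events}
    flows = []
    for src in source_events:
        dst = dest_by_id.get(src.message_id)
        if dst is not None:
            fee = src.amount - dst.amount
            status = "settled" if 0 <= fee <= max_fee else "exception"
        elif now - src.ts > sla_s:
            status = "exception"  # no destination leg within the SLA window
        else:
            status = "pending"
        flows.append(StitchedFlow(src, dst, status))
    return flows
```

The `exception` rows are the output that matters operationally. They feed reconciliation queues, while `settled` rows feed the cross-chain settlement model.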

For teams designing resilient processing layers, standard cloud-based architecture principles are still relevant because blockchain analytics workloads have the same practical concerns as other data systems. Fault tolerance, scaling strategy, observability, and service isolation still matter.

Dashboards, alerting, and action loops

A dashboard is the last mile, not the architecture. The goal is to give each team the information it needs to make decisions, at the latency that team works at.

Different functions need different views:

- Compliance teams need screening queues, exposure graphs, and investigation history

- Product teams need cohort retention, contract usage, and wallet journey views

- Treasury teams need inflow, outflow, liquidity, and concentration monitoring

- Governance teams need participation, delegate patterns, and proposal impact tracking

A good design principle is to separate operational dashboards from executive reporting. One is for action. The other is for interpretation.

The analytics stack also needs outbound triggers. Fraud flags should create cases. Liquidity anomalies should alert treasury. Governance thresholds should notify operators. If nothing happens when the metric changes, the metric probably doesn’t belong in the first release.

A practical reference for this architecture style is a dedicated data pipeline architecture approach, where ingestion, transformation, orchestration, and monitoring are treated as first-class system components rather than glue code.
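Outbound triggers can start as a small, declarative rule table that maps metric updates to actions. The rule names, thresholds, and action strings below are hypothetical. In practice, each action would call a case-management, paging, or workflow system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[dict], bool]
    action: str  # hypothetical action names consumed by a workflow service


# Illustrative thresholds only; real values come from risk and treasury policy.
RULES = [
    Rule("fraud_flag", lambda m: m.get("risk_score", 0) >= 80, "create_case"),
    Rule("liquidity_drop", lambda m: m.get("liquidity_change_pct", 0) <= -20, "notify_treasury"),
    Rule("low_turnout", lambda m: m.get("turnout_pct", 100) < 10, "notify_governance_ops"),
]


def route(metric_update: dict, rules=RULES):
    """Return (rule, action) pairs triggered by a metric update."""
    return [(r.name, r.action) for r in rules if r.condition(metric_update)]
```

Keeping rules in one versioned table, rather than scattered across dashboards, makes them reviewable when someone asks why an alert did or did not fire.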

Build the semantic layer before you polish the visual layer. Enterprises can tolerate an ugly dashboard longer than they can tolerate inconsistent truth.

Leading Blockchain Analytics Platforms and Companies

Off-the-shelf platforms are useful, but they solve different slices of the problem. Some specialise in compliance. Others focus on market intelligence or self-serve querying. Few handle private operational context well.

That matters because many enterprises don’t need “the best blockchain analytics tool”. They need the right blend of vendor capability, API access, and internal customisation.

One market gap keeps showing up in practice. In India, 78% of fintechs report unresolved oracle problems and data synchronisation gaps, which drive 25-30% higher compliance costs compared to global averages under PMLA and RBI-aligned workflows, according to FitGap’s enterprise blockchain analysis tools review. That’s a strong reminder that tool selection without integration planning usually underdelivers.

Comparison of Top Blockchain Analytics Platforms (2026)

| Platform | Primary Focus | Private Chain Support | API Access | Best For |
|---|---|---|---|---|
| Chainalysis | Compliance, investigations, transaction risk | Limited in typical public-chain workflows | Yes | AML, KYT, investigation teams |
| Elliptic | Compliance, wallet screening, risk intelligence | Limited in standard packaged workflows | Yes | Regulated transaction monitoring |
| TRM Labs | Risk intelligence, tracing, investigations | Limited in standard deployment models | Yes | Cross-chain tracing and compliance operations |
| Glassnode | Market and network intelligence | Not a typical focus | Yes | Treasury, market research, macro chain analysis |
| Nansen | Wallet intelligence and smart money tracking | Not a typical focus | Yes | Fund flows, wallet behaviour, ecosystem analysis |
| Dune Analytics | Community and analyst-led querying | Not a typical focus | Yes, with technical workflow constraints | Custom public-chain analysis |
| Messari | Research, protocol intelligence, market context | Not a typical focus | Yes | Strategy, ecosystem research, protocol benchmarking |

How to evaluate them as an enterprise buyer

A shortlist should be scored on fit, not popularity.

Compliance depth

Chainalysis, Elliptic, and TRM Labs are usually stronger where auditability, risk signals, and investigations matter most. They’re often a better starting point for regulated products than platforms built for market intelligence.

Flexibility for internal KPIs

Dune and Nansen are useful when teams need exploratory web3 data analytics, wallet pattern discovery, or bespoke protocol questions on public data. They’re less suitable as the entire analytics backbone for a private enterprise workflow.

Support for hybrid data models

Many blockchain analytics companies stop short. A CTO may still need to join KYC records, internal order books, treasury rules, governance permissions, or support logs to make the data useful. Vendor APIs help, but they rarely remove the integration burden.

Permissioned and private environments

If a system runs partly on permissioned infrastructure or uses enterprise middleware, public-chain-first tools won’t capture the full picture. In those cases, a custom blockchain intelligence platform or a tailored data analysis solution becomes more practical than trying to force-fit a standard product.

Buy platforms for acceleration, not for absolution. A vendor can speed up tracing or enrichment, but it won’t remove your responsibility to define business entities, KPIs, and control logic.

How Blocsys Delivers Custom Enterprise Analytics

Enterprise blockchain programs fail at the data layer more often than at the protocol layer. The root cause is usually architectural. Teams buy a dashboard built for public wallet analysis, then discover they still need to join internal customer records, approval workflows, treasury policies, and private contract events before any metric becomes decision-ready.

Blocsys approaches analytics as part of the operating system of the product, not as a reporting add-on. That matters in enterprise settings where tokenisation, trading, governance, treasury, and compliance all generate different data models and different audit requirements. A retail-style dashboard can show activity. It cannot define entities, enforce internal controls, or produce the custom KPIs a finance, risk, or operations team uses.

Where custom systems outperform packaged tools

Custom delivery makes sense when the main problem is semantic and operational fit, not raw access to chain data.

Common triggers include:

- Business-specific KPI definitions. Enterprises rarely measure success with generic wallet, volume, or holder metrics alone. They need internal views such as treasury exposure by policy bucket, customer activity by verified entity, governance participation by permission class, or settlement exceptions by workflow state.

- Hybrid on-chain and off-chain processes. A transfer on-chain may only be one step in a larger business event that also includes KYC approval, pricing logic, support intervention, or reconciliation status in internal systems.

- Proprietary contracts and event logic. Standard decoders cover common protocols. They do not automatically reflect the meaning of custom events, internal roles, or product-specific state transitions.

- Embedded controls. Enterprise analytics must feed alerts, case queues, approvals, and reports inside operating systems already used by compliance, finance, and ops teams.

The implementation burden rises quickly once private and public data have to coexist. Identity resolution becomes a design problem. So does lineage. A CTO has to decide which fields become canonical, where sensitive joins are permitted, how replay and reprocessing work after contract upgrades, and which teams can see raw versus derived data. Those decisions shape the analytics system far more than charting or dashboard tooling.
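Replay after a contract upgrade is cheap to design up front and expensive to retrofit. One approach, sketched below, registers a decoder per contract version keyed by activation block. Reprocessing any historical range then applies the logic that was live at the time and stamps each row with the version used. The upgrade block, version labels, and decode functions are hypothetical.

```python
import bisect
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DecoderVersion:
    from_block: int                 # first block where this contract version is live
    version: str
    decode: Callable[[dict], dict]


class DecoderRegistry:
    def __init__(self, versions):
        self.versions = sorted(versions, key=lambda v: v.from_block)
        self._starts = [v.from_block for v in self.versions]

    def for_block(self, block: int) -> DecoderVersion:
        # Pick the latest version whose activation block is <= `block`.
        i = bisect.bisect_right(self._starts, block) - 1
        if i < 0:
            raise LookupError(f"no decoder registered for block {block}")
        return self.versions[i]


def reprocess(raw_events, registry):
    """Re-decode historical events, stamping each row with the decoder version
    so derived tables keep lineage back to the logic that produced them."""
    rows = []
    for event in raw_events:
        dv = registry.for_block(event["block"])
        row = dv.decode(event)
        row["decoder_version"] = dv.version
        rows.append(row)
    return rows


# Hypothetical upgrade: v2 of the contract started charging an explicit fee.
def decode_v1(event):
    return {"amount": event["value"]}


def decode_v2(event):
    return {"amount": event["value"] - event["fee"]}
```

Stamping `decoder_version` on every derived row gives audit and data teams a direct answer to "which logic produced this number?"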

What a custom enterprise analytics stack should deliver

A workable enterprise stack usually includes four layers.

| Capability | Why it matters |
|---|---|
| Custom KPI dashboards | Maps reporting to internal business rules, compliance thresholds, and executive decision criteria |
| Hybrid on-chain and off-chain analytics | Connects ledger activity with CRM, KYC, treasury, support, and settlement data |
| Smart contract-level tracking | Captures product behavior at event and state-transition level rather than only wallet summaries |
| Real-time monitoring and reporting infrastructure | Supports fraud review, compliance escalation, treasury oversight, and operational alerts |

The design principle is simple. Analytics should live close to the systems where action happens.

That often means integrating with:

- governance workflows and internal approval systems

- market infrastructure such as prediction market platforms

- traceability operations built through custom Web3 platform development

- product environments such as raffle platforms

The result is less about visualization and more about control. A mature analytics layer converts blockchain events into reviewed entities, policy-aware metrics, and action paths inside the enterprise stack.

Build versus integrate

The strongest architecture is usually modular.

Use external vendors where the data is commoditized, such as public-chain enrichment, sanctions screening inputs, or broad risk signals. Build the semantic layer that maps contracts, wallets, counterparties, and internal records into business entities you trust. Keep dashboards, alerting logic, and workflow orchestration close to internal teams, because those components express your operating model and change most often.

This approach lowers lock-in and improves auditability. It also makes it easier to add predictive capabilities later, especially where teams are combining ledger data with operational context and machine-assisted decision support. That pattern is becoming more important as enterprises evaluate AI and blockchain integration for enterprise systems.

For Blocsys, custom enterprise analytics is not a prettier interface over chain data. It is a data architecture problem solved in production conditions, with private data boundaries, compliance constraints, and product-specific logic built into the system from the start.

Future Trends in Blockchain Analytics (2026-2028)

The next phase of enterprise blockchain analytics won’t be defined by prettier dashboards. It will be defined by systems that move from descriptive to predictive.

AI and blockchain analytics converge

As more teams combine structured ledger data with behavioural and operational context, AI models become more useful. The opportunity isn’t abstract “AI on blockchain”. It’s targeted inference.

That includes:

- suspicious activity prioritisation

- governance participation forecasting

- token flow anomaly detection

- treasury risk modelling across protocols and chains

This is one reason enterprise teams are paying more attention to AI and blockchain integration in 2026. The value comes from joining deterministic ledger records with probabilistic intelligence in a way humans can still audit.
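Token flow anomaly detection does not have to start with a complex model. The sketch below is a rolling z-score over daily flow totals, a deliberately simple statistical baseline rather than a machine-learning model. The window and threshold are assumptions. Its advantage is that every alert can be explained by pointing to the baseline it broke, which fits the auditability requirement above.

```python
from statistics import mean, pstdev


def flow_anomalies(series, window=7, z_threshold=3.0):
    """Indices where a value sits more than `z_threshold` population standard
    deviations from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mu, sigma = mean(history), pstdev(history)
        if sigma == 0:
            # Flat baseline: treat any change at all as anomalous.
            if series[i] != mu:
                flagged.append(i)
        elif abs(series[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged
```

Learned models can sit on top of a baseline like this. The baseline gives reviewers something concrete to check a model-generated alert against.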

Predictive token and governance models

Token economies create feedback loops. Emissions affect behaviour. Rewards affect retention. Governance changes affect liquidity and participation. Enterprises that can simulate these relationships before making policy changes will make fewer expensive mistakes.

The same applies to DAO operations. Governance intelligence is shifting from historical participation charts toward real-time interpretation of concentration, momentum, and proposal impact.

The likely architecture shift

The medium-term stack will look more like a continuous intelligence layer than a reporting layer.

Expect more:

- event-driven blockchain monitoring tools

- smart contract analytics tied directly to workflow automation

- decentralised data analytics blended with enterprise control systems

- blockchain data pipeline designs that support both compliance and product intelligence from the same source model

The winners won’t be the teams with the most dashboards. They’ll be the teams that connect blockchain data to operational decisions faster and more reliably than competitors do.

Blocsys Technologies helps fintechs, exchanges, digital asset businesses, and Web3 product teams design production-ready analytics systems that go beyond generic tools. If you need custom dashboards mapped to business KPIs, hybrid on-chain and off-chain intelligence, smart contract-level tracking, or real-time monitoring infrastructure for governance, trading, tokenisation, and compliance, connect with Blocsys Technologies. Get a custom blockchain analytics solution, or talk to our experts to plan the right architecture for your platform.